Optimizing an AI agent's context: eco & logical

At mAIstrow, we have an expression that comes up often in our internal conversations: eco & logical. It's a play on words coined by Vincent, a mutual friend, that packs three ideas into two words. Economical, because we work with limited resources and have always been attentive to what we consume. Ecological, because every billed token corresponds to real compute, somewhere in a datacenter, using energy and emitting CO2. And logical, because it turns out the two are aligned: the less context you waste, the more efficient your agent becomes, both in output quality and in speed.

This conviction isn't just a preference for frugality. It's backed by a technical observation I see confirmed every time I work seriously with an agent: the more you fill the context window, the less efficient the model becomes. The degradation starts well before the model's theoretical limit. Past a certain volume, attention drifts, older instructions become blurry, and the model mixes elements that should have stayed separate. You pay more to get a worse answer.

In this article, I describe the three techniques that make most of the difference in my daily practice. All three derive directly from Principle 6 of the parent article: design for cost from the start. This isn't late optimization, it's a design discipline.

Technique 1. A CLAUDE.md that says what to load, and above all what not to load

The first thing Claude Code does when entering a project is explore the tree structure. Without guidance, it will read what seems relevant: README files, configuration files, the largest files at the root. This exploration is useful in a small project. In a large project, it's an economic disaster.

The solution is a CLAUDE.md file at the project root. This file is not a general intent manifesto, it's a loading directive. It explicitly tells the agent which files to read at startup, and above all which files to ignore until you explicitly mention them.

A minimalist example:

    # Project: MyApp

    ## Always load
    - README.md (project overview)
    - docs/architecture.md (system design)
    - src/config.ts (configuration reference)

    ## Load on demand
    - src/**/*.ts (only when working on specific modules)
    - tests/**/*.ts (only when writing or debugging tests)
    - data/**/*.json (only when explicitly referenced)

    ## Never load
    - node_modules/
    - dist/
    - build/
    - *.log

The Always load section must stay minimal: three to five files maximum, the real entry points of the project. The Load on demand section describes patterns that the agent will only load when you specifically mention a matching file. The Never load section blocks noisy folders on principle.

The concrete effect: without this file, the agent easily loads five to ten thousand tokens of context at each session start, most of which is useless for your current task. With this file, it reads the three to five essential files, a few hundred tokens, and stops. On a project with many files, the reduction factor is significant. I won't give a precise number because it depends entirely on your project structure, but the difference is visible enough to justify spending five minutes writing this file before starting work.
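To make the three sections concrete, here is a minimal Python sketch of the policy they describe. It is only an illustration: the agent reads CLAUDE.md as natural-language instructions, and nothing in this article says it parses the sections mechanically. The `classify` function and the constant names are hypothetical, and `fnmatchcase` is used as a rough stand-in for glob matching.

```python
from fnmatch import fnmatchcase

# Hypothetical policy mirroring the three sections of the example CLAUDE.md.
ALWAYS_LOAD = {"README.md", "docs/architecture.md", "src/config.ts"}
LOAD_ON_DEMAND = ["src/**/*.ts", "tests/**/*.ts", "data/**/*.json"]
NEVER_LOAD_DIRS = ("node_modules/", "dist/", "build/")
NEVER_LOAD_GLOBS = ["*.log"]


def classify(path: str) -> str:
    """Return 'never', 'always', 'on_demand' or 'unlisted' for a project-relative path."""
    # "Never load" takes priority over every other rule.
    if path.startswith(NEVER_LOAD_DIRS):
        return "never"
    if any(fnmatchcase(path, glob) for glob in NEVER_LOAD_GLOBS):
        return "never"
    if path in ALWAYS_LOAD:
        return "always"
    # fnmatchcase lets '*' span '/' separators: a rough approximation of '**'.
    if any(fnmatchcase(path, glob) for glob in LOAD_ON_DEMAND):
        return "on_demand"
    return "unlisted"


for candidate in ["README.md", "src/api/user.ts", "node_modules/react/index.js"]:
    print(candidate, "->", classify(candidate))
```

Checking "never" first is a design choice of this sketch: it keeps a noisy folder excluded even if a broader pattern would otherwise match it.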

Technique 2. Short sessions, because history gets expensive

Here's a point that's often misunderstood, and I want to explain it precisely because there are many approximations circulating on the subject.

When you chat with an agent, each new message you send doesn't only contain your message. It contains the entire conversation history up to that point. The system prompt, the previous messages, the previous responses, the tool results, everything is re-injected at each turn. It's the only way for the model to remember what happened, since it has no persistent memory between two requests.

The consequences are significant. If your conversation contains N turns and each turn adds on average K tokens (your message plus the agent's response), then request number N sends as input roughly N × K tokens: the whole history plus your new message. Summed over the conversation, that is K × (1 + 2 + … + N) = K × N(N + 1)/2 input tokens, so the cumulative cost of the N requests grows in O(N²).

Concretely, that means a conversation that has lasted ten turns doesn't cost ten times one single request. It costs much more, because each turn carries the weight of all the previous ones. And if you let it run twenty turns, it's not twice the cost of ten turns, it's more like four times.
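The arithmetic can be checked with a few lines of Python. This is a sketch under simplifying assumptions: every turn adds the same number of tokens, no caching is applied, and the value of 500 tokens per turn is a hypothetical figure chosen only for illustration.

```python
def cumulative_input_tokens(turns: int, tokens_per_turn: int) -> int:
    """Total input tokens sent over a whole conversation, without caching.

    Request number i re-sends roughly i * tokens_per_turn tokens, so the
    total is K * (1 + 2 + ... + N) = K * N * (N + 1) / 2.
    """
    return tokens_per_turn * turns * (turns + 1) // 2


K = 500  # hypothetical average number of tokens added per turn
ten = cumulative_input_tokens(10, K)
twenty = cumulative_input_tokens(20, K)
print(ten, twenty, round(twenty / ten, 2))  # 27500 105000 3.82
```

With these assumptions, ten turns cost 55 times a single 500-token request, and doubling the conversation to twenty turns multiplies the bill by about 3.8.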

Prompt caching mitigates this problem, and I discuss it at the end of this article, but it doesn't eliminate it. And above all, it doesn't address the second effect, which is independent of cost: quality degradation. The more history you stack, the harder it is for the model to keep its attention on what matters for your current task. It remembers what it did at the start of the conversation and tries to stay consistent with that, while your need may have evolved since. It mixes rules that applied to an old sub-topic with your current question. It becomes more cautious, more hesitant, more verbose.

The rule I've ended up adopting: a new session as soon as I switch tasks. At minimum one new session per half-day, even if I'm still on the same project. It's counterintuitive when you come from an approach where "everything happens in one conversation", but this is where a large part of both cost and quality is decided.

A concrete number makes the rule easier to grasp: two 3,000-token requests are almost always better than a single 6,000-token request. Three independent reasons all point in the same direction.

First, the transformer's attention layer has a cost in O(n²) with respect to the number of tokens in the sequence. A 6,000-token prompt costs about 36 million attention units, while two 3,000-token prompts cost 2 × 9 = 18 million. Half, for the same useful work. The effect is modest at this scale because memory bandwidth dominates on short sequences in practice, but it's always in your favor.
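The same comparison as a short Python sketch. The "attention unit" here is the article's abstract n² count, not a measure of real hardware time, and `attention_units` is a hypothetical helper name.

```python
def attention_units(sequence_length: int) -> int:
    """Abstract cost of self-attention over a sequence: n squared."""
    return sequence_length ** 2


one_big = attention_units(6_000)         # one 6,000-token prompt
two_small = 2 * attention_units(3_000)   # two 3,000-token prompts
print(one_big, two_small, two_small / one_big)  # 36000000 18000000 0.5
```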

Second, if your two requests share a stable prefix (the system prompt, your CLAUDE.md, your documentation index), prompt caching bills that prefix at about 10% of its normal cost on the second request. If your 3,000 tokens of context contain 2,000 tokens of shared prefix, the second request pays 1,000 tokens at full rate and 2,000 at the reduced rate, worth about 200 full-rate tokens: roughly 1,200 in total instead of 3,000. The savings are cumulative across the work session.
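Here is that billing arithmetic as a small Python sketch. It assumes the cached prefix is billed at 10% of the normal input rate, as stated above, and it deliberately ignores any surcharge a provider may apply when the cache is first written. The function name and parameters are hypothetical.

```python
def billed_token_equivalents(
    total_tokens: int,
    cached_prefix_tokens: int,
    cached_rate_percent: int = 10,
) -> float:
    """Input cost of one request, expressed in full-rate token equivalents."""
    fresh_tokens = total_tokens - cached_prefix_tokens
    # Integer arithmetic first, then one division, to avoid float rounding noise.
    return (fresh_tokens * 100 + cached_prefix_tokens * cached_rate_percent) / 100


first_request = billed_token_equivalents(3_000, 0)        # nothing cached yet
second_request = billed_token_equivalents(3_000, 2_000)   # 2,000-token prefix reused
print(first_request, second_request)  # 3000.0 1200.0
```

The second request costs 1,000 fresh tokens plus 200 token equivalents for the cached prefix, which is 40% of the uncached price.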

Third, the model stays focused. Its attention window doesn't spread across 6,000 tokens, half of which have nothing to do with your current task. That's the qualitative argument, and it's probably the most important of the three in the long run.

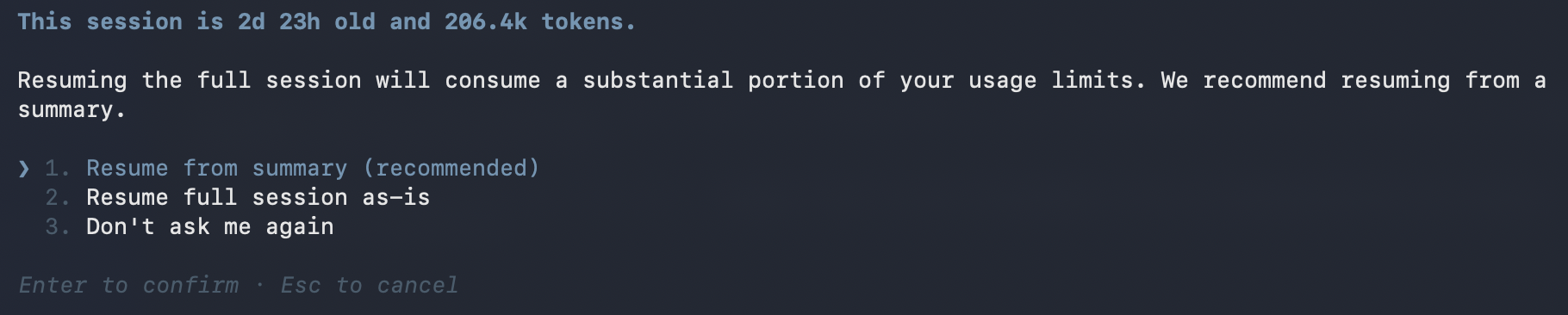

Claude Code offers to resume from a summary when the session gets expensive: here 206,000 tokens accumulated after almost three days.

Since the April 2026 update, Claude Code ships an auto-compaction mechanism that triggers as the context limit approaches. The system summarizes the conversation and continues with that synthesis as the new context. The /compact and /clear commands also let you do it manually: the first keeps a summary, the second starts from scratch. It's an explicit acknowledgment by Anthropic that the problem is real. But it's important to understand that auto-compaction is lossy: detailed instructions from the start of the conversation get replaced by a summary, and if they mattered for what came next, they can be lost. The official documentation actually recommends putting persistent rules in CLAUDE.md rather than relying on conversation history, which is exactly the discipline I'm defending here.

In other words, auto-compact is a safety net for when discipline fails, not a substitute for discipline. The right strategy remains the same: short sessions, persistent rules in files rather than in conversation, and clean restarts rather than fuzzy compactions.

And for this discipline to be practical without losing useful history, the method has to live outside the conversation. Which brings us to the third technique.

Technique 3. Finely split files, not monoliths

Many people build their agentic documentation as a large monolithic file: a five-hundred-line project.md containing everything, from the project description to the rules, the personas, the examples, the playbooks, the technical decisions, and the meeting notes. This file ends up being ten to fifteen thousand tokens. Every session where the agent loads it, all of those tokens weigh on the context, and the vast majority have no connection to your current task.

The alternative is fine-grained splitting. Instead of one big file, you have:

    docs/
      INDEX.md              # 20 lines, the map of the folder
      architecture.md       # system view
      conventions.md        # code rules
      domain/
        entities.md
        workflows.md
        edge-cases.md
      agents/
        MANIFEST.md
        support-agent/
          ROLE.md
          playbook-escalation.md

The index is about twenty lines and lists the other files with a one-sentence description for each. It's the only file that's systematically loaded. The others are loaded only when they become relevant to the current conversation.

This logic is exactly the same as in programming: you don't load the entire standard library to use one function. You import what you need, period. AI agents follow the same logic, as long as you give them the means.

The index is the pivot of this organization. It must stay short, and above all it must be written to be read by the agent, not by a human browsing a table of contents. One sentence per entry, precisely describing when this file becomes relevant. Not "domain description", but "to load when discussing business rules, workflows, or customer edge cases". The agent reads this sentence and decides whether to load the file. The more precise the indication, the better the decision.
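The difference between a vague and a precise index entry can be illustrated with a deliberately crude Python sketch. A real agent decides with language understanding, not keyword overlap, so `select_files` is only a hypothetical stand-in for that judgment, and the entry descriptions other than the one quoted above are invented for the example.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class IndexEntry:
    path: str
    load_when: str  # one sentence, written for the agent


# Words too generic to signal relevance (hypothetical list).
STOP_WORDS = {"to", "load", "when", "or", "and", "the", "for", "a", "discussing", "writing"}


def keywords(text: str) -> set[str]:
    """Lowercase words of a sentence, minus the generic ones."""
    return set(re.findall(r"[a-z]+", text.lower())) - STOP_WORDS


def select_files(task: str, index: list[IndexEntry]) -> list[str]:
    """Crude stand-in for the agent: keep entries sharing a keyword with the task."""
    task_words = keywords(task)
    return [entry.path for entry in index if task_words & keywords(entry.load_when)]


precise = [
    IndexEntry("domain/workflows.md",
               "to load when discussing business rules, workflows, or customer edge cases"),
    IndexEntry("conventions.md", "to load when writing or reviewing code"),
]
vague = [
    IndexEntry("domain/workflows.md", "domain description"),
    IndexEntry("conventions.md", "code stuff"),
]

task = "Handle the customer refund edge cases"
print(select_files(task, precise))  # ['domain/workflows.md']
print(select_files(task, vague))    # []
```

Even with this naive matcher, the precise sentence gives the selection something to hold on to, while the vague label gives it nothing.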

The bonus: prompt caching works for you

If you adopt the three previous techniques, you also benefit from an optimization that Claude does automatically and that most users don't know about: prompt caching.

The idea is simple. If your loaded files stay stable between two consecutive requests, the corresponding tokens are billed at about ten percent of their normal rate for subsequent requests. Anthropic keeps them in cache on the server side and bills them at a discount since the corresponding compute cost has already been paid.

This optimization is powerful, but it has a strict condition: the files must be strictly identical between requests. If you rewrite your CLAUDE.md at each session, the cache breaks. If your index changes by one line, the cache breaks for everything that came after it. The Claude Code leak reveals that their team monitors fourteen different vectors that can invalidate this cache, and that they've designed specific mechanisms to avoid it, notably the SYSTEM_PROMPT_DYNAMIC_BOUNDARY marker that separates the static cacheable part from the dynamic part.

The practical lesson for you: don't touch your reference files during a working session. Treat them like production code. Modifications happen between sessions, deliberately, not in the flow of work. It's a hygiene discipline that pays twice: it stabilizes the agent's behavior, and it makes the cache work for you.
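Why a one-line change early in the context is so costly can be sketched in Python. This is a simplified model that assumes caching applies to the longest identical prefix of the prompt and treats each file or message as one segment. Real caches work at a finer granularity, and the function name is hypothetical.

```python
def cached_segment_count(previous: list[str], current: list[str]) -> int:
    """Number of leading segments that are strictly identical in both requests."""
    count = 0
    for old, new in zip(previous, current):
        if old != new:
            break  # everything after the first difference is re-billed at full rate
        count += 1
    return count


previous = ["system prompt", "CLAUDE.md v1", "INDEX.md v1", "turn 1"]

# Stable reference files: the whole previous request is reusable.
stable = previous + ["turn 2"]
# One line edited in the index: only the segments before it stay cached.
edited = ["system prompt", "CLAUDE.md v1", "INDEX.md v1 + one new line", "turn 1", "turn 2"]

print(cached_segment_count(previous, stable))  # 4
print(cached_segment_count(previous, edited))  # 2
```

In this model, editing the index mid-session throws away the cached index and every message after it, even though those messages did not change.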

Eco & logical

These three techniques, combined, transform the economics of using an AI agent. But the most important benefit isn't financial, it's qualitative. An agent with a well-managed context is an agent that responds faster, makes fewer mistakes, and stays focused on your task instead of drifting into adjacent topics.

That's exactly what I called at the opening eco & logical. Economical because you spend less. Ecological because you consume less compute, therefore less energy, therefore less CO2. Logical because it's also the configuration in which the model performs best. The three point in the same direction, which isn't always the case in the software industry where best practices sometimes contradict each other.

You just have to give up a reflex that's deeply ingrained in AI agent users: the idea that "the more information I give it, the better it understands". That's false. Past a certain threshold, the more information you give it, the less it understands. The skill isn't to give everything, it's to give exactly what's needed, at the moment it's needed.

Going further

The next article in this series tackles the strategic counterpart of this discipline: how to ensure that the work you do for a given agent isn't lost the day you switch tools. The portability of the method, and what survives or doesn't survive a model change.

And if you're interested in the same cost obsession applied to inference itself rather than to usage, herbert-rs works on the other end of the chain: eliminating useless matrix operations, profiling each layer, and treating compute as a scarce resource. Eco & logical, all the way through.

AI agents methodology -- series

- What the Claude Code leak actually reveals: a methodology for building AI agents

- One source of truth per role: the silent trap of agentic systems

- Optimizing an AI agent's context: eco & logical

- Markdown as insurance: why your agentic method must outlive the model (coming soon)